When the Machine Is Usually Right

The real risk is not just AI errors; it is that many users cannot tell when the system is wrong

By Adam Stein (& ChatGPT, Gemini, NotebookLM)

An office worker pastes a contract clause into an AI assistant and asks whether it is enforceable. The system produces a confident explanation, complete with citations to legal precedent. A small notice warns that the tool can make mistakes and should be verified with a professional. The worker skims the answer and sends an email based on it anyway.

Interactions like this are becoming routine.

Artificial intelligence is often criticized for producing errors: fabricated citations, broken code, or confidently wrong explanations. But the more important risk may be what it does to the people using it. The growing catalog of what people now call “AI slop” has become a familiar complaint in classrooms, workplaces, and online forums. The usual conclusion follows naturally: large language models are unreliable. That conclusion is understandable, but it does not reflect the actual problem.

The real risk is not that AI systems sometimes generate incorrect answers; it is that the humans using those systems are not checking their work. For decades, researchers studying safety‑critical industries have documented a behavioral pattern known as automation bias. When people work with automated systems that perform reliably most of the time, they gradually reduce their own vigilance. They verify less. They question less. And when the system eventually fails, they become less likely to detect the mistake.

Until recently, automation bias was most visible in expert environments using specialized tools. Studies of decision-support systems in medicine and aviation have documented the pattern. In some clinical studies, physicians changed correct diagnoses to incorrect ones after consulting an automated system. Pilots have followed incorrect cockpit alerts despite contradictory instrument readings. Military operators have accepted automated threat classifications even when other evidence suggested the system was wrong. In each case the system usually worked until the moment it didn’t, and the human operator had already stopped questioning it. Automation improved performance on average, but introduced a predictable category of error: humans trusting the machine too much.

Versions of this behavior have appeared in everyday technology before. Early consumer GPS systems occasionally sent drivers down the wrong road, or in a few well-publicized cases into lakes or dead-end roads, because digital maps were incomplete. The problem was not that the navigation system occasionally made mistakes; no map is perfect. The problem was that some drivers assumed the system must be right, even when the route clearly did not make sense to a human paying attention. Artificial intelligence changes the scale of that dynamic, bringing this pattern into everyday decision‑making.

In effect, AI has democratized automation bias, extending what was once a problem in expert systems into domains where users cannot tell when the system is wrong. It has placed a cognitive assistant into millions of hands. Ordinary people are asking for investment advice, writing essays, or building software they can’t understand, a practice developers have taken to calling “vibe coding.” In each case, people are operating in domains where they may have little underlying expertise, or where the volume of work has outpaced their capacity for careful review.

Consider a hiring manager reviewing hundreds of job applications. Instead of reading each one carefully, they ask an AI system to summarize the candidates and rank them. The summaries are plausible, and the rankings feel systematic. But over time, the manager reads fewer applications themselves and becomes less certain what a strong candidate actually looks like. If the system misinterprets a resume, overlooks an unusual career path, or invents a detail that was never there, the manager may not recognize the mistake. The automation has not simply accelerated the task; it has quietly replaced the manager’s independent judgment.

When automation is used under conditions where the user lacks verification capacity, two distinct failure modes appear. The first is that the user does not know what a good output should look like. If an AI system produces legal language, technical analysis, or complex code, the user may not have the background knowledge required to judge whether the result makes sense. The second is more subtle: even when something goes wrong, the user may not know how to recognize that a failure has occurred, or how to diagnose it.

The first failure is straightforward but easy to underestimate. If a person lacks a mental model of what a sound answer looks like, they cannot meaningfully judge whether the output is strong, weak, or subtly flawed. A polished response can therefore create the illusion of competence. The user sees fluency, structure and confidence, but has no independent basis for deciding whether those qualities reflect genuine correctness.

The second failure is different and in some ways more consequential. Here the user is not merely unable to rank the quality of the output; they may not even know that a mistake has been made. The error leaves no obvious trace. Nothing feels wrong, so nothing triggers review. In that situation, automation does not just influence judgment. It suppresses the very signal that would tell a person their judgment should be activated.

In expert settings, automation bias is dangerous but somewhat bounded because experts retain the underlying skills needed to detect errors. A physician can usually recognize when a recommendation is clinically implausible. A pilot can cross-check instruments and procedures. Experts also have internal standards against which they judge automated outputs. For many everyday AI users, that safeguard does not exist.

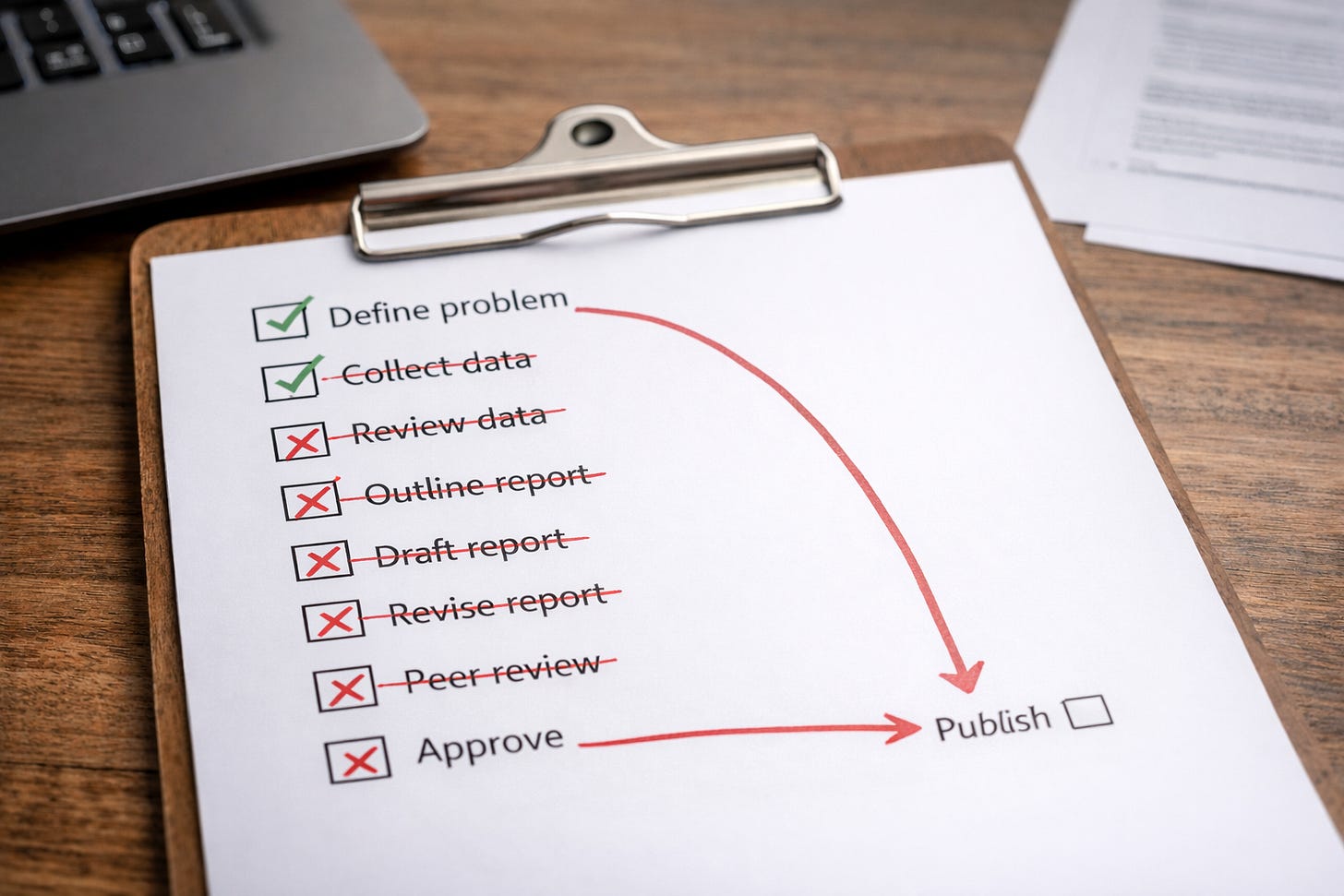

When people operate in domains where they lack expertise, they often substitute surface signals for real evaluation. A fluent summary feels reliable because it reads well. A ranking generated by software feels objective because it was produced by an algorithm. These cues resemble evidence of quality, but they are only proxies. Without an independent standard for judging correctness, those proxies quietly replace genuine verification. The same mechanism that causes a hiring manager to trust a flawed ranking causes a writer to accept a first AI draft as though revision were unnecessary. Anyone who has written a paper understands that a rough draft is followed by editing and iteration. That feedback loop is often missing when people use AI tools, sometimes because users do not realize it should exist at all.

A student using AI to summarize research papers may not recognize fabricated citations. A small business owner automating spreadsheets may not fully understand the logic embedded in formulas the model produced. Someone relying on AI-generated code or analysis may not know how to verify whether the results are correct. When verification capacity disappears, automation bias changes form: instead of experts trusting machines too much, non‑experts begin outsourcing cognition entirely. The workflow becomes simple: ask the model, receive an answer that appears plausible, move on.

Research on automation bias shows that systems performing correctly most of the time can still degrade decision quality. Reliable automation gradually trains users to treat outputs as authoritative rather than provisional, and the remaining errors slip through precisely because people have stopped checking.

Paradoxically, improvements in system reliability can make the effect worse. Consistent success encourages what researchers sometimes call learned carelessness: users reduce scrutiny because past experience suggests scrutiny is unnecessary. What AI adds is scale. It amplifies this dynamic in two ways at once: it dramatically lowers the barrier to attempting complex tasks, and it produces outputs fluent and plausible enough to anchor human judgment even when they are wrong. The result is a category of failure that looks like a technology problem but is fundamentally a human one.

Public debate about AI risks tends to focus on the models themselves: hallucinations, training data limitations, or alignment failures. Those concerns are real. But focusing exclusively on model errors overlooks the human side of the equation. The more consequential question is what happens to human cognition when automated reasoning tools become ubiquitous. History suggests a clear answer: people adapt. They adapt by delegating parts of their reasoning process to machines, or by trusting outputs that appear credible. And over time, they adapt by investing less effort in verifying results that are usually correct.

Return to the example of early GPS navigation. Over time, both the technology and its users improved. Maps became more accurate, and people learned when to trust directions and when to question them. But the underlying tendency to defer did not disappear. It adapted. Deferring to a usually reliable system is not irrational: in many contexts, it is an efficient response to cognitive cost, since verifying every AI output can easily take longer than generating it in the first place. But efficiency and reliability are not the same thing.

As AI systems move deeper into education, workplaces, and professional practice, and increasingly into research, engineering, and policy analysis, the central challenge will not simply be improving model accuracy. It will be preserving the human capacity for independent verification, and the institutional expectation that it will happen. Safety‑critical industries learned this lesson long ago. Automation not only changes what machines can do; it changes how humans think and how carefully they check their own decisions.

What AI has done is extend that familiar human tendency into domains where many users lack the expertise to verify the results. The real risk of AI is not that machines will make mistakes. It is that, as we grow accustomed to relying on them, we may become less likely to notice when they do.